ATT: 6 months after its release, what impact has App Tracking Transparency had?

Six months ago, Apple imposed App Tracking Transparency on all app publishers: a revolution in mobile advertising. With a new way of tracking users and restricted targeting options on iOS, many advertisers took fright, unsure where to start.

Following our first review, carried out one month after ATT came into force, let's take stock again with a bit more hindsight.

Almost the entire iOS installed base has adopted iOS 14.5 or later

According to Branch and AppsFlyer, the adoption rate sits between 75 and 90% (including iOS 15). For practical purposes, the installed base as a whole can be considered to run an iOS version that supports ATT. Those who have not yet updated their device will do so with a later release, will never do so, or cannot because their device is too old.

Having run dedicated campaigns ever since Apple's ATT framework came into force, we see that 65% of the iOS budget across our campaigns goes to SKAdNetwork, a share that tracks the adoption trend.

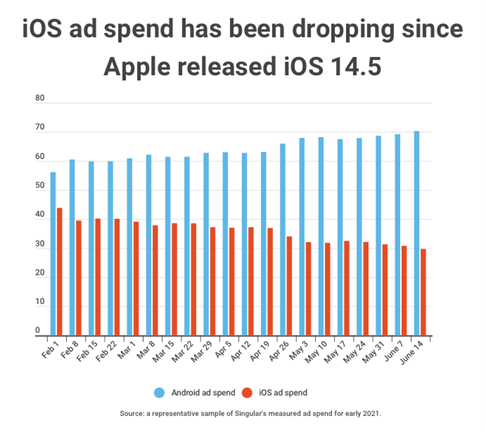

A significant impact on Android campaigns, an opportunity to seize on iOS

App Tracking Transparency has affected the performance of Android campaigns because advertisers massively shifted their budgets to Android and cut their iOS spend. This increased competition significantly raised CPMs on Google's OS and therefore degraded overall campaign performance by pushing CPI up.

Across our campaigns, all sources and countries combined, we observed Android CPM rising by 12% between May and July.
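To illustrate why a higher CPM feeds through to CPI, here is a minimal sketch. It relies on the standard identity CPI = CPM / IPM, where IPM is the number of installs per 1,000 impressions. The baseline CPM and IPM values are hypothetical and chosen only for illustration; the 12% uplift is the figure quoted above, and the sketch assumes the install rate stays constant.

```python
def cpi(cpm: float, installs_per_mille: float) -> float:
    """Cost per install, given CPM and installs per 1,000 impressions (IPM)."""
    if installs_per_mille <= 0:
        raise ValueError("installs_per_mille must be positive")
    # Spend per 1,000 impressions divided by installs per 1,000 impressions.
    return cpm / installs_per_mille


# Hypothetical baseline, for illustration only.
baseline_cpm = 10.0  # currency units per 1,000 impressions
ipm = 5.0            # installs per 1,000 impressions, assumed unchanged

cpi_before = cpi(baseline_cpm, ipm)
cpi_after = cpi(baseline_cpm * 1.12, ipm)  # CPM up 12%, same install rate
cpi_uplift = cpi_after / cpi_before - 1.0
```

Under that constant-IPM assumption, CPI rises by the same proportion as CPM; in practice the install rate also moves, so the real effect can be larger or smaller.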

Conversely, CPM on iOS is falling, because sources sell SKAdNetwork traffic more cheaply and competition keeps easing as spend shifts to Android. On our side, we observed a 47% decrease in iOS CPM.

This shift can be seen in the chart below, taken from an article by Singular.

A higher IDFA rate than expected

Early estimates put the share of users with an IDFA between 10 and 15%. This refers to opt-in both on the app side and on the side of the source serving the campaigns (double opt-in).

However, the rate appears to be higher in practice. At Addict Mobile we see double opt-in rates between 25 and 40%, whereas other players such as Branch or AppsFlyer report an average of 21%.

The gap between these rates may look small, but it has a real impact on performance measurement. Having an additional 10 to 15% of installs with an IDFA makes it easier to rely on those results when projecting the performance of opt-out users.

Choosing the right view to optimize your campaigns

Since opt-in users and users on iOS versions below 14 are still reported in your tracking tool as usual, you can either keep looking at performance directly in your usual MMP or look at it through SKAdNetwork.

But how do you know where and how to check performance?

This choice should not be improvised. Depending on your app's mechanics, the types of sources you activate, your objectives and the opt-in/opt-out split of your users, you will not pick the same data source to compute your performance. Apple Search Ads is a special case, because its attribution is handled by the AdServices API, which is already integrated into the various MMPs.

To make this choice, you can rely on the following indicators:

- Share of view-through attribution for the source: if a large share of installs is attributed through view-through, it is better to use the data attributed by the MMP, because SKAdNetwork only captures a very small portion of those installs (the ad must stay on screen for 3 seconds for the impression to be counted as such). Losing view-through attribution sometimes hurts performance far more than losing part of click attribution.

- IDFA rate: the split between installs with and without an IDFA matters a great deal when deciding how to look at performance. If your opt-in rate is high, your performance should not have changed much since the release of iOS 14.5+. In that case you can keep relying on MMP data without paying much attention to SKAdNetwork data. Conversely, if the number of installs attributed with an IDFA on your campaigns is very low, your main KPIs will degrade for lack of visibility, and the data reported by SKAdNetwork will be more meaningful.

- Watch out for the privacy threshold: this is the minimum install volume below which Apple decides not to send back the data for events generated by your SKAdNetwork campaigns. Apple considers that with only a few installs per day, the advertiser could identify users by cross-referencing the actions taken within the app, which would go against the brand's commitment to privacy.

Depending on the volume of installs you generate each month, it makes more sense to analyze your campaign data either in the MMP or through SKAdNetwork.

- If you generate few installs per month, you will most likely be below the privacy threshold, so:

– No events through SKAdNetwork

– The MMP will only report events from users who have opted in

- If you generate many installs per month, you will be above the privacy threshold, so:

– Events will be reported through SKAdNetwork for both opt-in and opt-out users. You will be able to optimize on this data alone.

– In the MMP: only events from opt-in users, so technically less data (setting aside the other factors discussed elsewhere in this article)
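The indicators above can be combined into a simple decision rule. The sketch below is only one possible reading of them: the `SourceStats` structure, the `preferred_view` function and all three cut-off values are hypothetical and should be calibrated on your own data. In particular, the install threshold used here is a placeholder, since the article does not give the actual value of the privacy threshold.

```python
from dataclasses import dataclass


@dataclass
class SourceStats:
    view_through_share: float  # share of installs attributed view-through (0..1)
    opt_in_rate: float         # share of installs carrying an IDFA (0..1)
    monthly_installs: int      # install volume generated by the source


# Hypothetical cut-offs, for illustration only.
HIGH_VIEW_THROUGH_SHARE = 0.30
HIGH_OPT_IN_RATE = 0.40
MIN_INSTALLS_FOR_SKAN_EVENTS = 1000  # placeholder for the privacy threshold


def preferred_view(stats: SourceStats) -> str:
    """Return "MMP" or "SKAdNetwork" as the suggested reporting view."""
    # Below the privacy threshold, SKAdNetwork sends back no event data.
    if stats.monthly_installs < MIN_INSTALLS_FOR_SKAN_EVENTS:
        return "MMP"
    # SKAdNetwork captures very little view-through attribution.
    if stats.view_through_share >= HIGH_VIEW_THROUGH_SHARE:
        return "MMP"
    # With a high opt-in rate, MMP data still covers most users.
    if stats.opt_in_rate >= HIGH_OPT_IN_RATE:
        return "MMP"
    return "SKAdNetwork"


example = SourceStats(view_through_share=0.05, opt_in_rate=0.15, monthly_installs=20000)
suggestion = preferred_view(example)
```

Apple Search Ads is deliberately left out of this rule, since its attribution goes through the AdServices API rather than SKAdNetwork.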

Building a performance estimation model

Attribution and tracking have changed a great deal, so it is important to have attribution models that let you assess the real performance level of your campaigns.

This takes two steps. First, estimate the number of installs that your tracking solution does not report:

– The share of users who opted out and no longer show up in the MMP

– The share of installs generated through view-through that do not show up in SKAdNetwork

Second, estimate the quality of these missing installs by computing an LTV based on the data available to you (organic traffic, iOS installs that are still attributed, Android data, historical data from both OSes, etc.).

By combining these two estimates, you can estimate the value of your installs and thus make more accurate projections of your campaign performance.
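As a rough illustration of these two steps, here is a minimal sketch for the MMP view. It assumes that attributed installs represent exactly the opt-in share of all installs, and that the LTV of missing installs can be approximated by a weighted average of reference LTVs. The function names, the reference segments and every figure are hypothetical.

```python
from typing import Mapping


def estimate_missing_installs(attributed_installs: float, opt_in_rate: float) -> float:
    """Step 1: installs not reported by the MMP, scaled up from the opt-in rate."""
    if not 0 < opt_in_rate <= 1:
        raise ValueError("opt_in_rate must be in (0, 1]")
    estimated_total = attributed_installs / opt_in_rate
    return estimated_total - attributed_installs


def blended_ltv(reference_ltvs: Mapping[str, float], weights: Mapping[str, float]) -> float:
    """Step 2: weighted average LTV across the available reference segments."""
    total_weight = sum(weights[name] for name in reference_ltvs)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(ltv * weights[name] for name, ltv in reference_ltvs.items()) / total_weight


def projected_value(attributed_installs: float, attributed_ltv: float, opt_in_rate: float,
                    reference_ltvs: Mapping[str, float], weights: Mapping[str, float]) -> float:
    """Combine both estimates into a projected total value for the campaign."""
    missing = estimate_missing_installs(attributed_installs, opt_in_rate)
    return attributed_installs * attributed_ltv + missing * blended_ltv(reference_ltvs, weights)


# Hypothetical inputs, for illustration only.
missing = estimate_missing_installs(attributed_installs=250, opt_in_rate=0.25)
value = projected_value(
    attributed_installs=250,
    attributed_ltv=4.0,
    opt_in_rate=0.25,
    reference_ltvs={"organic": 2.0, "android": 4.0},
    weights={"organic": 1.0, "android": 1.0},
)
```

The same structure could be applied to the SKAdNetwork view by replacing the opt-in scaling with an estimate of the view-through installs that SKAdNetwork misses.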

You can go further still with the data you use by accounting for seasonality, disparities between OSes, variations in install volume, and so on. The longer your data history, the more the calculation can be refined.

If you run high-volume campaigns and would like a helping hand to master the iOS 15 environment, feel free to contact us for a free audit of your campaigns.
